Article

From Distributed Scheduler to WebAssembly: Secure Orchestration of AI Agents

Lessons learned while building SyncMyOrders.

Working on a SaaS product comes with many challenges, though it’s also interesting in many ways. One of the rewards of the process is being able to observe the evolution of the product, especially for things, hidden from end users of the product.

At AgileVision we built several similar integrations for a single customer. Each one was slightly different - new systems, field mappings, different triggers. As requirements shifted, we kept adjusting parameters, adapting configurations, tweaking logic. At some point it became obvious: we weren’t building bespoke integrations anymore. We were building the same thing over and over.

The natural conclusion was to make it configurable. A flexible solution to connect different systems, support different transformation logic, handle different workflows. But we could not rely on a rigid structure. We had to build a platform.

This decision, combined with experience of using workflow orchestration engines, and my passion for DAGs (Directed Acyclic Graphs), led to SyncMyOrders.

Phase 1 - The Distributed Scheduler

The pragmatic first step was to evaluate what already existed. Could we build on top of an existing orchestration foundation? We tried some of those. For sure, there are several powerful players on the market, which we could use as a foundation for our product.

Several things we noticed:

- Such solutions require a lot of resources for the underlying engine. Even a modest setup would require enormous amounts of memory and powerful processors to run a simple orchestration.

- Deployment of such solutions requires a lot of effort and often assumes you have to run Kubernetes cluster.

- Vendors often release their solution as an open-source, but they do everything to push you towards their cloud solution. For example, server versions compatibility in Temporal.io assumes you need to do incremental updates and doesn’t recommend skipping minor / patch versions. Taking into account new versions appear every month or so, it would force us into dealing with Temporal updates vs actually building the product.

We are fully bootstrapped, and we don’t have a luxury of throwing money at the problem. Instead, we had to be creative. Our goal was to have fully isolated tenant for each customer, which in case of “traditional tools” would mean a Kubernetes cluster per customer, a lot of supporting infrastructure, and high operating costs. Because of it, we used a bit different approach.

I’m originally from a Java world. I found a really stunning, yet not that popular library on GitHub - db-scheduler (https://github.com/kagkarlsson/db-scheduler). At this point we already had a DSL (domain-specific language) designed. The decision was to create our own as well, instead of using existing things like ASL (Amazon States Languages) or Serverless Workflows for two reasons:

- Our main concept is to keep things simple and both ASL and Serverless Workflows have more abstractions than we need.

- Both ASL and Serverless Workflows make assumptions about underlying resources in a way, and we wanted to have a DSL without any ties to compute layer specifics or transport layer specifics.

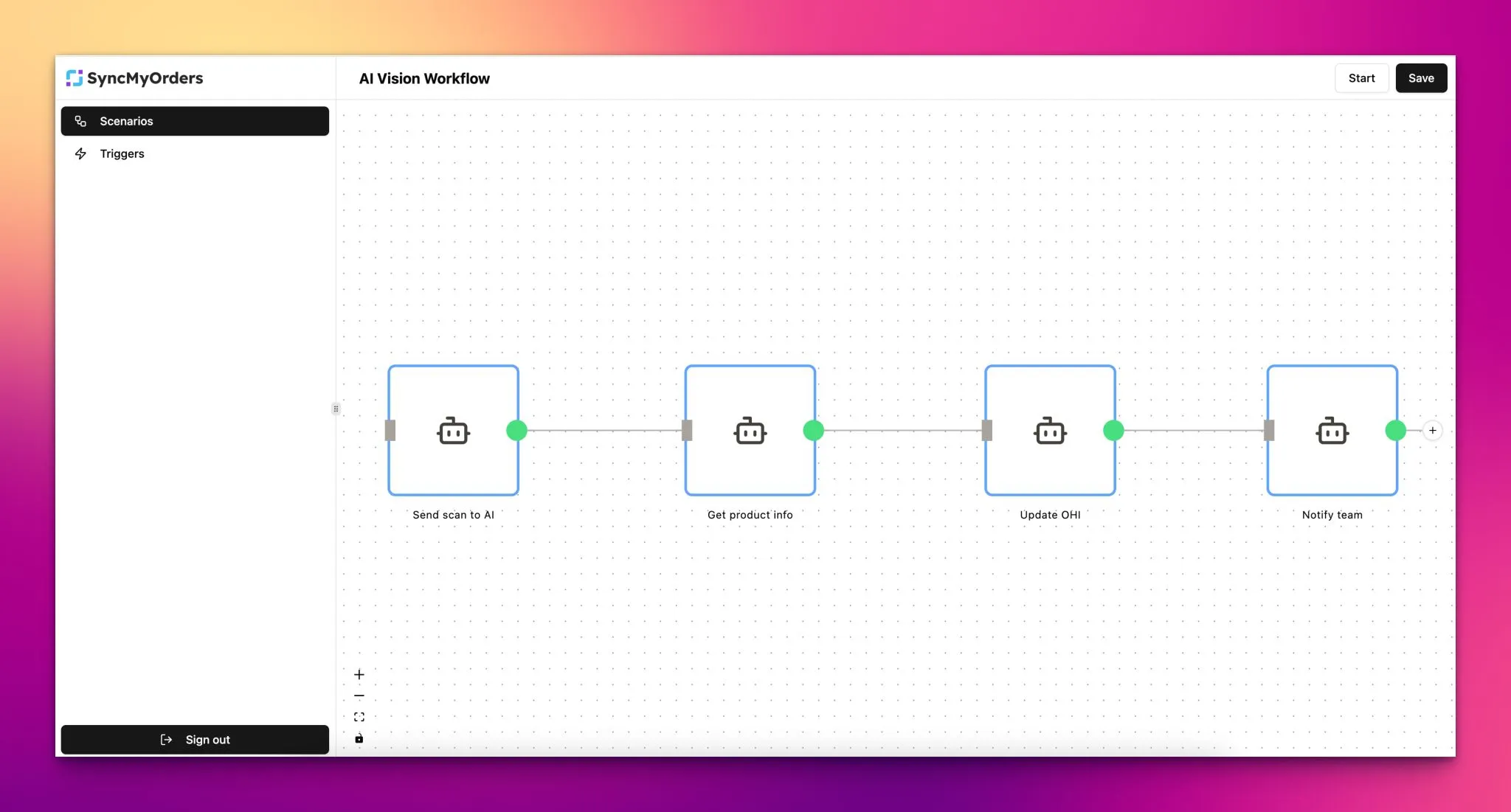

The first prototype was released. Initially, the platform was so simple, all the UI was in two tabs. It was enough to solve the problem for our first customer and verify the idea.

After our second customer, we faced two problems which would not go away.

The small-big data problem. Integration workloads often involve what I call “small amounts of big data” - or the reverse. A product catalog with 10K, 100K, or 1M items where you need to do something with each record. In a distributed scheduler architecture, you either batch it (complexity) or you schedule each item individually (enormous coordination overhead). Neither is clean.

The resource problem. To support our cases, we had to scale underlying resources, and of course got into the situation we were initially trying to escape: distributed orchestration requires a lot of system resources to be “fast enough”.

Phase 2 - A DSL That Compiles to Binaries

After some time and several brainstorming sessions, I came to a realization: if you look at an orchestration engine - or a no-code platform in general - implemented on a distributed scheduler, what you’re actually looking at is a distributed code interpreter running on top of databases and compute nodes.

You’re essentially modeling an execution environment that works exactly like a CPU. It allocates processing time to each node, collects results, merges them, orders them correctly. Philosophically speaking, you’re implementing a virtual machine on top of a database.

And that’s the moment it clicked: we don’t want to implement a compute layer on top of databases. Because if you think it through, that’s exactly what it becomes. The distributed nature hides it, but strip that away and you have a DSL being executed by a set of distributed nodes - you’ve implemented an interpreter on top of your database. You could even probably run DOOM on it, because you can run DOOM on anything. But the problem is efficiency. You’re using the underlying compute to emulate a virtual machine or interpreter, which means you’re using powerful, expensive machines to do simple things - and paying a lot for it.

That led me to a different conclusion: instead of implementing a distributed computing machine on top of other computing machines that cost a lot of money, we should keep the data distributed but keep the compute layer simple. Let it run directly on the native compute power of the server, utilizing it as much as possible. If the data is distributed properly, the computations themselves can be cheap.

The result: each workflow becomes a small, self-contained executable. No interpreter. No event queues. The “orchestrator” is the binary itself. We just needed a reliable persistence.

For durability - the ability to survive crashes and resume from the same point - we didn’t build something complicated. We built a small library on top of PostgreSQL. PostgreSQL is proven, it’s easily made highly available, and it’s already in the stack. Every layer uses tools that are already there rather than reinventing primitives.

In essence, we replaced the underlying distributed orchestration with a compilation pipeline. It takes workflows described in our DSL - a JSON-based format - transforms them into a Rust program, and builds that into a binary. The choice of Rust wasn’t random. Rust supports many target platforms, and I had something else in mind for the future: compiling not to a specific platform, but to WebAssembly. That’s why we eventually switched to Rust for code generation.

To make execution secure, we wrapped the binary in a rootless container. On top of that, using Linux capabilities and network namespace isolation, we wrapped that container in its own isolated network. What we ended up with were independent, high-performance workflows that require very little system resources to run.

This became RUNTARA - the underlying execution framework, an implementation of the durable execution paradigm.

As a side effect, we were able to solve something that had always bothered me. Most orchestration engines charge per execution - and for good reason, given the resources they consume underneath. Even on smaller plans, even on smaller deployments, they still charge you for every step or workflow invocation.

Switching to workflows-as-binaries allowed us to completely ditch that model. We don’t charge customers for executed workflows. They can use as many steps and as many invocations as they want. There are physical limits to the underlying compute environment, of course, but it lets us create very efficient, fully isolated deployments - without nickel-and-diming our customers.

Phase 3: Security as Architecture

The moment you’re handling business data across systems - ERP records, order data, customer PII - security becomes load-bearing, not cosmetic.

Our first pass at runtime isolation used rootless containers for each workflow execution, combined with network isolation layers. The compiled binary ran inside that environment. It worked well. Resource consumption was reasonable. But rootless containers still carry significant OS overhead. You can’t fit many isolated environments on a single host. And even rootless Docker provides more attack surface than we wanted.

Why Web Assembly

WebAssembly (WASM) is a platform-neutral virtual machine with a well-defined instruction set, a reasonable memory model, and - critically - a deny-by-default security architecture. You can’t do anything from inside a WASM module unless the host explicitly provides it as a capability.

This is exactly the property we wanted. Instead of trying to lock down a general-purpose container, we get a sandbox where the maximum blast radius is determined at compile time by what host functions we expose.

WebAssembly has also passed the credibility threshold. Cloudflare runs their edge workers on it. Shopify uses it for third-party app isolation. The ecosystem is real and growing - on both backend and frontend.

The frontend part matters more than it might seem at first. The same scenario that compiles to a WASM binary for server-side execution can run in a browser tab. We’ll come back to that at some point.

We wanted capability-based security: an environment where we could whitelist exactly what a workflow is allowed to do, and nothing more. The threat model isn’t primarily external attackers - it’s the inherent risk of executing logic that was, at some level, shaped by user configuration. That led us to WebAssembly.

WASM Challenges

WebAssembly’s standardization process moves slowly. Features arrive as proposals, become experimental, and take years to reach stable status. We deal with those constraints daily. WASI (the system interface standard) is improving, but there are real limitations on what you can do inside a WASM environment today.

We work around most of them. For anything a scenario needs from the outside world - HTTP calls, credential access, storage - we provide host functions.

The scenario never touches the network directly; it calls back to the host, which performs the operation and returns the result. This constraint turns out to be a feature, not just a limitation. Just a very annoying feature. I tell it myself every day.

The AI Agent Connection

When everyone started handing over their computers to language models and chaotically purchasing Mac Minis, a structural problem became visible: prompt injection.

A model can’t distinguish between instructions and data. A username and an injected command look identical at the token level. There’s no patch for this - it’s the fundamental nature of how these models work. As long as user input reaches a model, prompt injection is a real attack surface.

The standard response has been defensive prompting - wrapping user inputs, instructing the model to distrust certain content. It works until it doesn’t. There will always be a prompt that bypasses the one protecting you from injection. We took a different approach: accept prompt injection as a given, and design the consequences to be bounded.

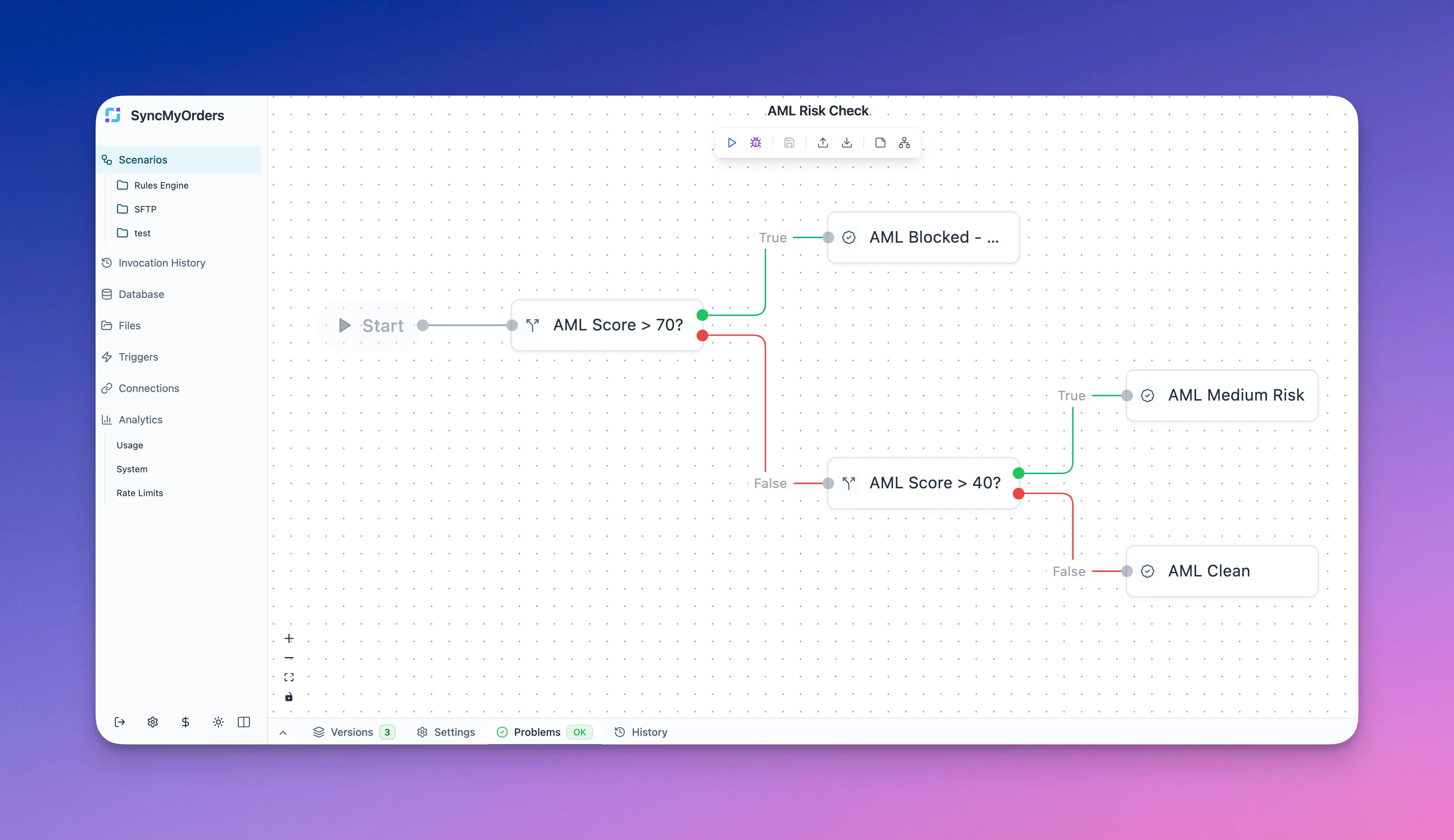

Here’s the observation that shaped it. Most business automation doesn’t need a fully undetermined agent that can do anything. It needs a predictable process with smart decision-making at specific points. An agent that can do everything effectively does nothing useful - the solution space is too large.

So we asked: what if an AI agent’s tools were pre-bound at configuration time, compiled into a WASM module, and run inside the sandboxed execution environment?

The result is an agent with:

- A finite, enumerable set of capabilities.

- No access to anything outside those capabilities, regardless of what the model decides to do

- An observable surface - we can hook before and after every tool call, monitor prompts externally, and add guard layers that operate outside the model’s context.

Prompt injection still possible. But the maximum damage is now a function of what tools we decided to give it, not what the attacker manages to convince it to do.

This is the “AI-configured, deterministically executed” model in practice. The agent has real intelligence and real tool-calling capability. The execution environment constrains what intelligence can actually touch.

What’s next

At this point, we have all the primitives needed to solve most customer problems in the integration and data synchronization space. We are actively expanding into new areas while using the platform daily to solve real customer challenges.

One significant shift came with the maturity of large language models. We now generate around 95% of automations directly from customer requirements - no manual workflow editing required. Customers can use their preferred AI tool to update their automations. This reduced deployment time substantially and cut the effort needed to produce proof of concepts for new customers to almost nothing.

We are careful about AI in general - careful about where it actually adds value versus where it’s noise. But we are actively using it, actively building with it, and I think that’s the right direction. Our goal is to make SyncMyOrders a safe execution platform for AI agents: one that combines the best of both worlds - deterministic workflows with smart decision-making, but also self-healing workflows that can adjust themselves automatically when something breaks or drifts.

That’s the next step. Right now you still have to tell the AI to fix your workflow. The end goal is automations that evolve on their own - and once they stabilize, lock into deterministic execution that you can trust.